We all know that information about our adversaries and partner countries is and always has been critical to National Security. Before our interconnected world of the internet, information operations were more about human spying, one person to another. As technology has progressed, information has only become more critical, and today it is the most critical component of modern warfare.

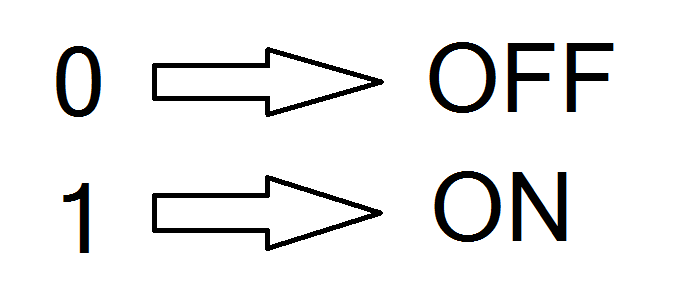

The root of today’s information technology can be traced back to the humble use of the binary numbering system of ones and zeros. Before that, information was locked into the analogue world of sine wave radio frequency communications, decimal numbers, and language specific alphabets.

Using the binary numbering system of 1’s and 0’s, called bits, changed all of that because bits have the revolutionary ability to be processed through electronic circuits that can easily translate an “on” transistor as a 1 bit and an “off” transistor as a 0 bit. It is this simplicity that enables noisy electronic signals to be correctly interpreted as 1’s or 0’s. Combining this electronic simplicity with the invention of integrated circuits now containing billions of transistors in a single chip, has given rise to our modern digital world.
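
As a rough illustration of why a noisy signal can still be read correctly as 1’s and 0’s, here is a minimal Python sketch. It assumes an idealized circuit where an “off” transistor sits at a level of 0.0, an “on” transistor at 1.0, and the noise never exceeds 0.4 in either direction. The function names, the threshold, and the noise limit are all assumptions made for this example, not a description of any real chip.

```python
import random

THRESHOLD = 0.5  # midpoint between the nominal "off" (0.0) and "on" (1.0) levels


def transmit(bits, noise_amplitude, rng):
    """Turn each bit into its nominal level and add bounded random noise."""
    return [bit + rng.uniform(-noise_amplitude, noise_amplitude) for bit in bits]


def receive(levels):
    """Decide each bit by comparing the noisy level with the threshold."""
    return [1 if level > THRESHOLD else 0 for level in levels]


rng = random.Random(42)  # fixed seed so the run is repeatable
sent = [1, 0, 1, 1, 0, 0, 1, 0]
noisy = transmit(sent, noise_amplitude=0.4, rng=rng)
recovered = receive(noisy)

print("noisy levels:", [round(level, 2) for level in noisy])
print("recovered   :", recovered)
```

As long as the noise stays below half the gap between the two levels, every bit lands on the correct side of the threshold, so the recovered bits match the ones that were sent. An analogue value has no such margin: any noise changes it.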

Likewise, in wired and wireless communication systems, detecting 1’s and 0’s in transmitted information is much easier than accurately reproducing an analogue sine wave. So the simple idea of binary bits has enabled orders of magnitude more information to be transmitted within a given frequency band, wire, or glass fiber strand, and stored within a magnetic or electronic medium. As an example, undersea fiber optic cables transmit 95% of today’s global information, and a single fiber can now transmit as much as one terabit per second (10 to the 12th power, or 1,000,000,000,000, bits per second).
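
To put one terabit per second in more familiar terms, the short Python sketch below converts it to bytes per second and estimates a transfer time. The 50 GB file size is a hypothetical figure chosen only for illustration, and the calculation ignores protocol overhead and distance.

```python
BITS_PER_SECOND = 10**12  # one terabit per second, the figure quoted above
BITS_PER_BYTE = 8

# 10**12 bits per second is 125,000,000,000 bytes (125 GB) per second.
bytes_per_second = BITS_PER_SECOND // BITS_PER_BYTE


def transfer_seconds(size_bytes, rate_bits_per_second=BITS_PER_SECOND):
    """Idealized time to move size_bytes over a link of the given bit rate."""
    return size_bytes * BITS_PER_BYTE / rate_bits_per_second


# Hypothetical example: a 50 GB file (50 * 10**9 bytes).
seconds = transfer_seconds(50 * 10**9)

print(f"{bytes_per_second:,} bytes per second")
print(f"50 GB file: {seconds} seconds")
```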

The World is Analogue. Isn’t It?

The net result is our current world, where everything has become represented, stored, moved, processed, and controlled by bits and the machinery that translates bits into our human analogue brains. I used to have a picture of planet earth on my college dorm wall with a caption that said, “The world is analog, isn’t it?”

Humans are the dominant species on our planet because we are superior information sharers. And if we haven’t shared information for some reason, we steal it. As such, warfare as a component of tribal or national security has always been a cat and mouse game of getting ahead of, or falling behind, one’s adversaries. In the past, tribal or national security has been about obtaining and controlling physical space, by the force of military and/or police control, to enable rule-of-law.

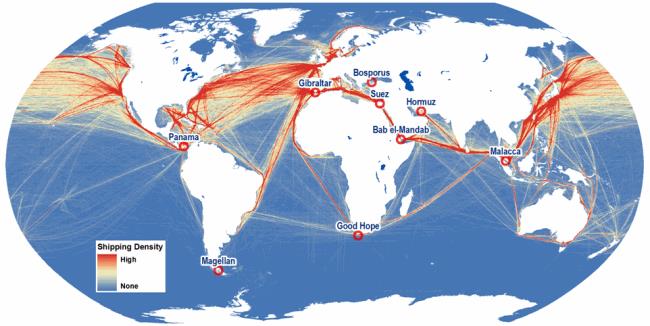

In today’s world, physical space is still critical, but bits now control all of the primary elements of our human lives. By that I mean that bits literally control all of the primary flows on our planet: food, water, money, commodities, manufactured goods, real estate, banking, stocks & bonds, and energy in every form. Therefore, if the right bits don’t get to the right place, at the right time, our planet’s flows are disrupted!

On our multi-thousand-year human timeline, cybersecurity has only been a real thing in the last microsecond. The term cybersecurity was first invented in 1989 when the word cyber began to be added to some words to make them more futuristic and interesting. Cyber came from the 1948 invention of the term cybernetics most famously defined by Norbert Wiener who characterized cybernetics as “the scientific study of control and communication in the animal and the machine”.

As we often hear, the foundation of our modern internet, and all of the compute machinery that makes it possible, was not invented nor designed with a need for cybersecurity. It wasn’t considered important at the time, and the inventors had no idea that DoD’s ARPANET would become the dominant network technology of our planet. But it was less than twenty years after the beginnings of ARPANET before the first foreign cyber hacking instances against our military began in the early 90s. Not until the late 90s did the DoD begin to take stronger actions to prevent cyber intrusions. And the very first DARPA cybersecurity research project was initiated in the late 90s.

In 1999, the futurist Ray Kurzweil, in his book, “The Age of Spiritual Machines,” predicted that by 2009, “The security of computation and communications is the primary focus of the Department of Defense. There is general recognition that the side that can maintain the integrity of computational resources will dominate the battlefield.” Interestingly, the U.S. Cyber Command stood up in mid-2009, and likewise, each of the Military Services stood up operational Cyber Commands in 2009. The Navy rebirthed the name 10th Fleet as its Cyber Command (it was originally a special anti-submarine command during WWII).

Kurzweil’s predictions mostly evolved from Moore’s Law, which Gordon Moore first stated in 1965 and revised in 1975 to predict that the number of transistors in an integrated chip would double about every two years (often quoted as every 18-24 months)… Following Moore’s Law, it has been predicted that by 2024 a single $1000 chip will have more computing power than the human brain, and by 2045 more power than the human race. That Moore’s Law has held up since 1975 led Kurzweil to embrace the idea in his 2005 book “The Singularity is Near,” that “By the 2040’s, non-biological intelligence will be a billion times more capable than biological intelligence (a.k.a. us).” [Recall from your math classes that a singularity of a mathematical function is a point where the function goes to infinity or isn’t well behaved.]
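
To get a feel for what repeated doubling does, here is a small Python sketch of the Moore’s Law arithmetic. The starting point is my own choice for illustration: the Intel 4004 of 1971, with about 2,300 transistors. The function name and the idea of projecting forward with a fixed doubling period are assumptions of this sketch; real chips did not follow the curve exactly.

```python
def projected_transistors(start_count, start_year, year, doubling_years=2.0):
    """Project a transistor count forward, assuming one doubling per period."""
    doublings = (year - start_year) / doubling_years
    return start_count * 2 ** doublings


# Illustrative starting point: about 2,300 transistors in 1971 (Intel 4004).
every_two_years = projected_transistors(2300, 1971, 2021)
every_18_months = projected_transistors(2300, 1971, 2021, doubling_years=1.5)

print(f"Doubling every 2 years  : {every_two_years:,.0f}")
print(f"Doubling every 18 months: {every_18_months:,.0f}")
```

Fifty years at one doubling every two years is 25 doublings, which turns a few thousand transistors into tens of billions. Shortening the doubling period to 18 months changes the result by orders of magnitude, which is why the exact period matters so much in long-range predictions.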

So in our world now dominated by bits and preyed upon by criminal and nation state hackers, where does it all leave our human race? It leaves us in a mess where we can only hope that new technologies and the right human choices will carry us safely forward!

In future posts I will discuss our changing world of cryptocurrency, blockchain contracts, quantum computing, and software 2.0. Could these new technologies outpace the cybersecurity threats that plague our current world?

So should a Cyber Force be stood up as a separate service?

Thanks Jim, a cyber force would more formally acknowledge cyberwarfare, but that doesn’t help our vulnerable infrastructure that provides our planetary flows…

Moore’s Law predicted only a small aspect of our cyber problems. The larger area of concern is the proliferation of digital computing and communications into every aspect of our lives. The Internet of Things has increased cyber attack surfaces in ways not even fully understood by most if not all of us. Small components we put into systems can connect wirelessly in ways we don’t expect. Subsystems can have communications paths we only partially use which can be used against us. Innovative hacking can find weaknesses where we never thought to look.

Thanks Garth, and in the meantime cybersecurity has become its own industry full of too much process supported with too few knowledgeable actors that are always chasing the hack rather than preventing the hacks… why doesn’t every software/system use automated PenTesting to eliminate all of the known hacks on a daily basis rather than relying on certifications that mean little in the real world…

In my opinion, we have an 18th century approach to governance in a 21st century technology-driven culture. Not only do our leaders move slowly and protect the status quo, the organs of government resist change and deny obvious threats. Climate change, as an example, has been predicted for 50 years, and no government has yet demonstrated how any country will adapt adequately.

Any system will break when confronted with an exponentially increasing disturbance. We can see how civil society has broken down as citizens respond to the plethora of available information by opting-in to information bubbles that confirm their beliefs and reinforce unproductive behaviors. Capitalism nurtures these self-reinforcing bubbles. The future of that time line is ominous.

False, misleading, and hateful speech damages brains, harms people, and reduces society’s progress. Known poisons should be regulated and eliminated. This will require a change in social perceptions and political evolution. Recent civil suits against perpetrators of big lies give hope that somebody will actually have to pay those harmed by their lies. However, nearly all lying continues unabated, delivered by media platforms that monetize consumer attention.

In the meantime, we at Trusted Origins Corp. are soliciting global participation in the Disinformation Resistance Community (DRC) at resistdisinfo.com. On this platform, members can contribute Trusted knowledge and assess Internet articles as Distrusted, and consumers in general can block Distrusted content from Internet searches. The end goal of the DRC is to provide a safe place for citizens to search the Internet and find Trusted answers. This would at least provide a big bubble for people seeking truth.

Thanks Rick, as you point out, it is almost impossible to know which information source is telling the truth with unbiased intentions (to my knowledge nothing that starts with humans can have unbiased intentions). You mention climate change as if that is settled science… I have been studying climate change for 15 years. At first I believed all of the hype about runaway positive CO2 feedback loops destroying life on our planet… but now I think the cosmic ray/cloud cover theory research leaves us far from a definitive truth on how societies should respond to our planet’s climate, given the impact on developing societies, but that is a longer discussion. I guess part of the problem is our human mental frailty. Perhaps the Chinese Social Credit System could be a useful solution so long as it couldn’t be biased by a controlling government, religion, or other large biased organization… How about a transparent blockchain social credit system?…

Your final question I believe has been answered and that answer is yes. We need to focus on the broader issue of cybersecurity rather than individual attacks. It is time we recognize the threat and all it poses for our existence because as you correctly noted the 0s and 1s control the flow of services necessary for our lives. Thank you for the stimulating article.

Thanks Rebecca, a follow-on discussion has to be about how we can transform our vulnerable information in all forms into technologies not so easy to exploit. One such possibility is truly random encryption keys created using quantum random number generators. This technology exists today. Add to that the blockchain revolution, and we can foresee a future, as discussed by George Gilder in his book, “Life After Google.” That revolution has already started with alternate non-fiat Bitcoin currency, and related blockchain contracts and transactions. It is just starting to get interesting…

This is a good, general intro to the topic. Well written, a bit simplistic, but if it’s going to a very wide and non-technical audience, probably a good thing.

Thanks Robert, it is very simplistic. I gave a talk to a very non-technical audience about cybersecurity, so I used that material as the foundation of this post. What I left out because of length is the discussion about how significant the OPM hack and the recent SolarWinds hack are in the continuing cyberwar escalation between China, Russia, and the U.S.

Good job. Thanks Marv.

Nice article, thanks Marv.

Very well written Marv. I recall my earlier days of presenting to Senior Executives and Flag/General Officers, and being told to express everything with simplicity. Not everyone has the deep technical knowledge like many reading this blog, and if we want to obtain the proverbial “buy-in” from a larger audience, then we must ensure that everyone can understand the environment and threats.

Thanks Bryan, as in all things human, understanding complexity is difficult and often ignored as too hard :-)

Cybersecurity enhancements and performance measurement both require executive buy-in and I’m discovering that leaders oftentimes are unaware that they need situational awareness individually and holistically. I would love to help agencies discover the lenses that they need to have in order to support National Security and Infrastructure Security efforts.